AI shows you the answer. Scaffold shows you the reasoning.

Role

Product Designer (Self-initiated, 2026)

Key Outcome

A personal component library exploring a new design vocabulary: separating what AI found from what it concluded.

React, TypeScript, Tailwind CSS and Framer Motion

Reading Time

3 minutes

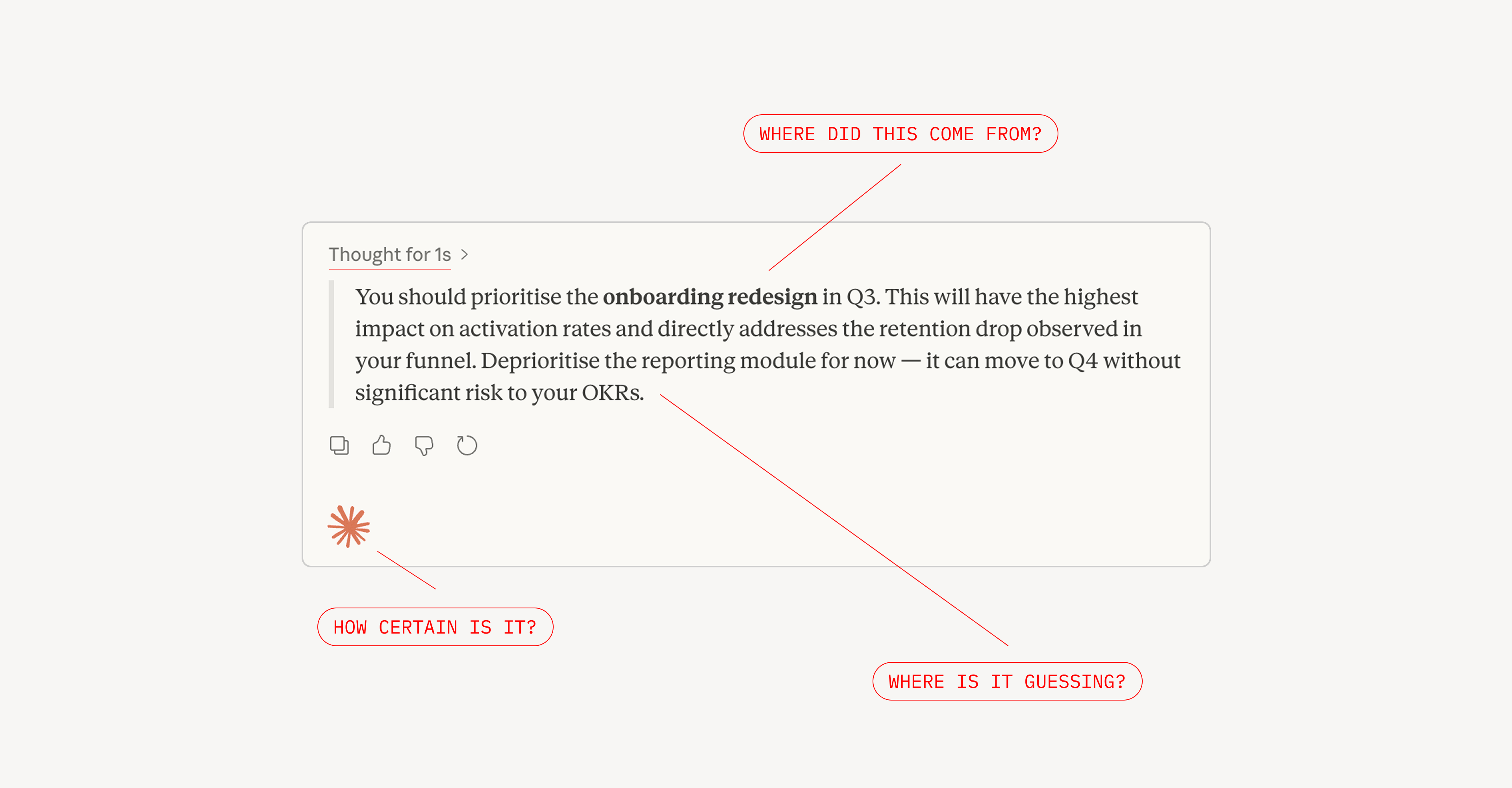

Most AI products ask for trust before they’ve earned it. They present recommendations with nothing to back them up. No reasoning, no evidence, no signal of where the AI is certain and where it’s guessing.

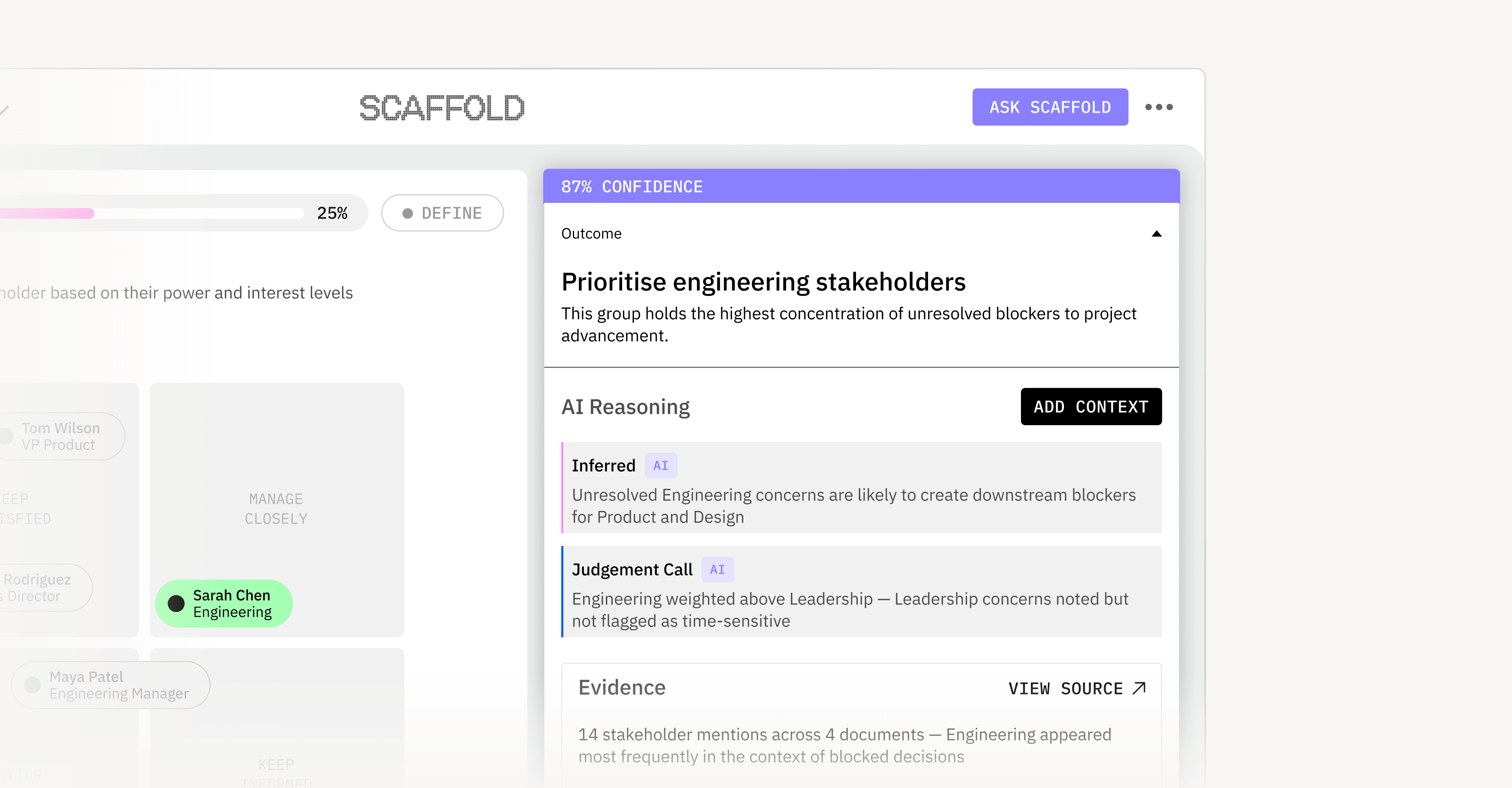

What the AI found vs. what the AI concluded

Findings are backed by evidence: a document, a data point, or a quote. Conclusions are inferred: the AI spots patterns and makes a judgement call. The two warrant significantly different levels of trust. Scaffold is built around making that difference visible.
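One way to picture the split in code is a discriminated union, where a finding must carry its evidence and a conclusion must point back to the findings it was inferred from. This is a minimal sketch, not Scaffold's actual data model: every type name, field, and the sample data below are assumptions made up for illustration.

```typescript
// Hypothetical data model: names and fields are illustrative assumptions.
type EvidenceKind = "document" | "data-point" | "quote";

interface Evidence {
  kind: EvidenceKind;
  source: string; // where the evidence came from
}

// A finding is something the AI located, so it always cites evidence.
interface Finding {
  type: "finding";
  statement: string;
  evidence: Evidence[];
}

// A conclusion is inferred, so it references findings rather than sources.
interface Conclusion {
  type: "conclusion";
  statement: string;
  basedOn: Finding[];
}

type Claim = Finding | Conclusion;

function plural(count: number, noun: string): string {
  return `${count} ${noun}${count === 1 ? "" : "s"}`;
}

// Label each claim so the reader can tell found from concluded at a glance.
function describeClaim(claim: Claim): string {
  switch (claim.type) {
    case "finding":
      return `Found (${plural(claim.evidence.length, "source")}): ${claim.statement}`;
    case "conclusion":
      return `Concluded (inferred from ${plural(claim.basedOn.length, "finding")}): ${claim.statement}`;
  }
}

// Made-up sample data, for illustration only.
const sampleFinding: Finding = {
  type: "finding",
  statement: "Support tickets mention slow exports",
  evidence: [{ kind: "document", source: "tickets.csv" }],
};

const sampleConclusion: Conclusion = {
  type: "conclusion",
  statement: "Export speed is a likely driver of complaints",
  basedOn: [sampleFinding],
};

console.log(describeClaim(sampleFinding));
console.log(describeClaim(sampleConclusion));
```

Keeping the two as separate types means a component cannot render a conclusion as if it were evidence-backed; the compiler forces the distinction that the interface is trying to make visible.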

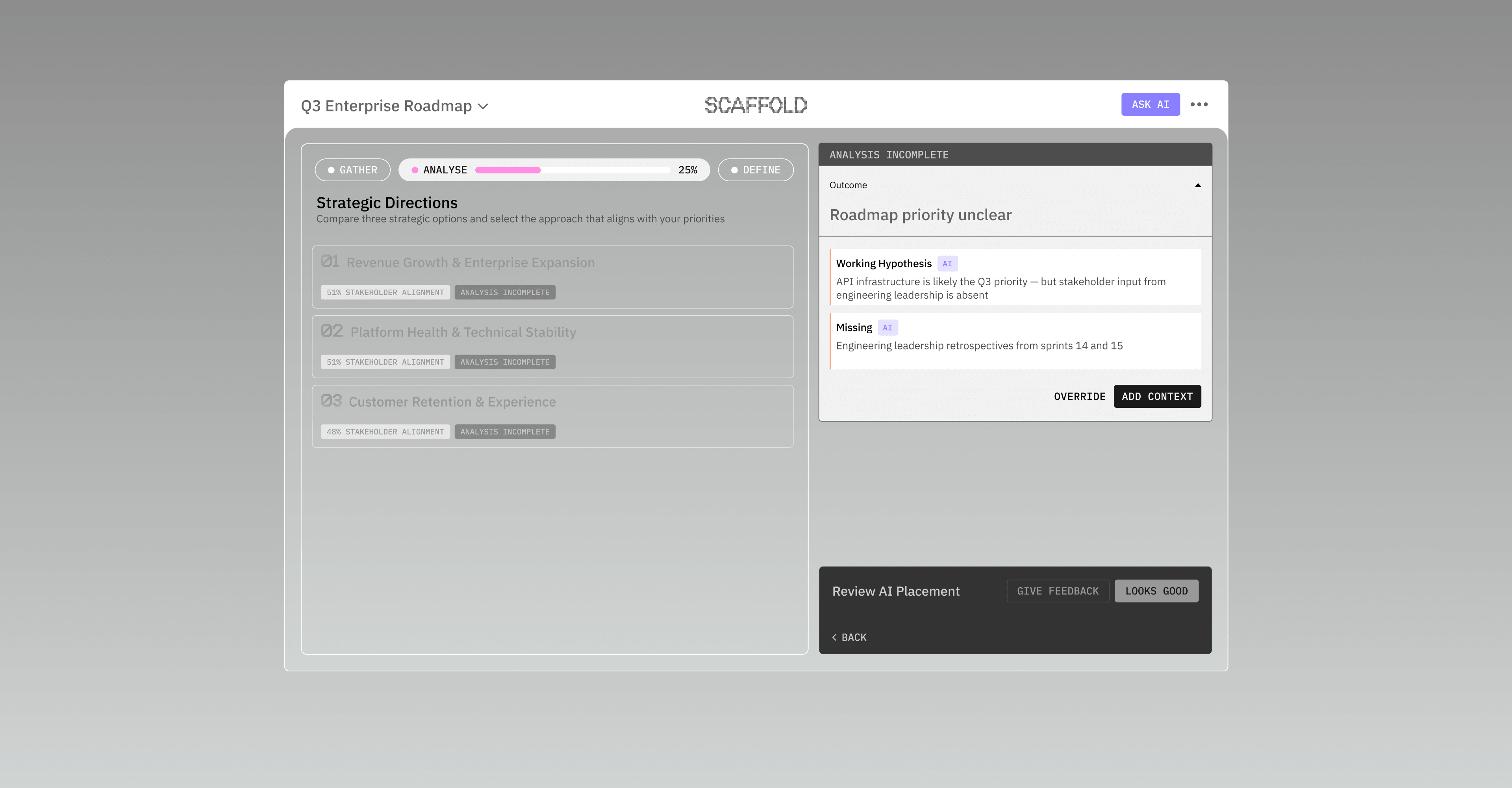

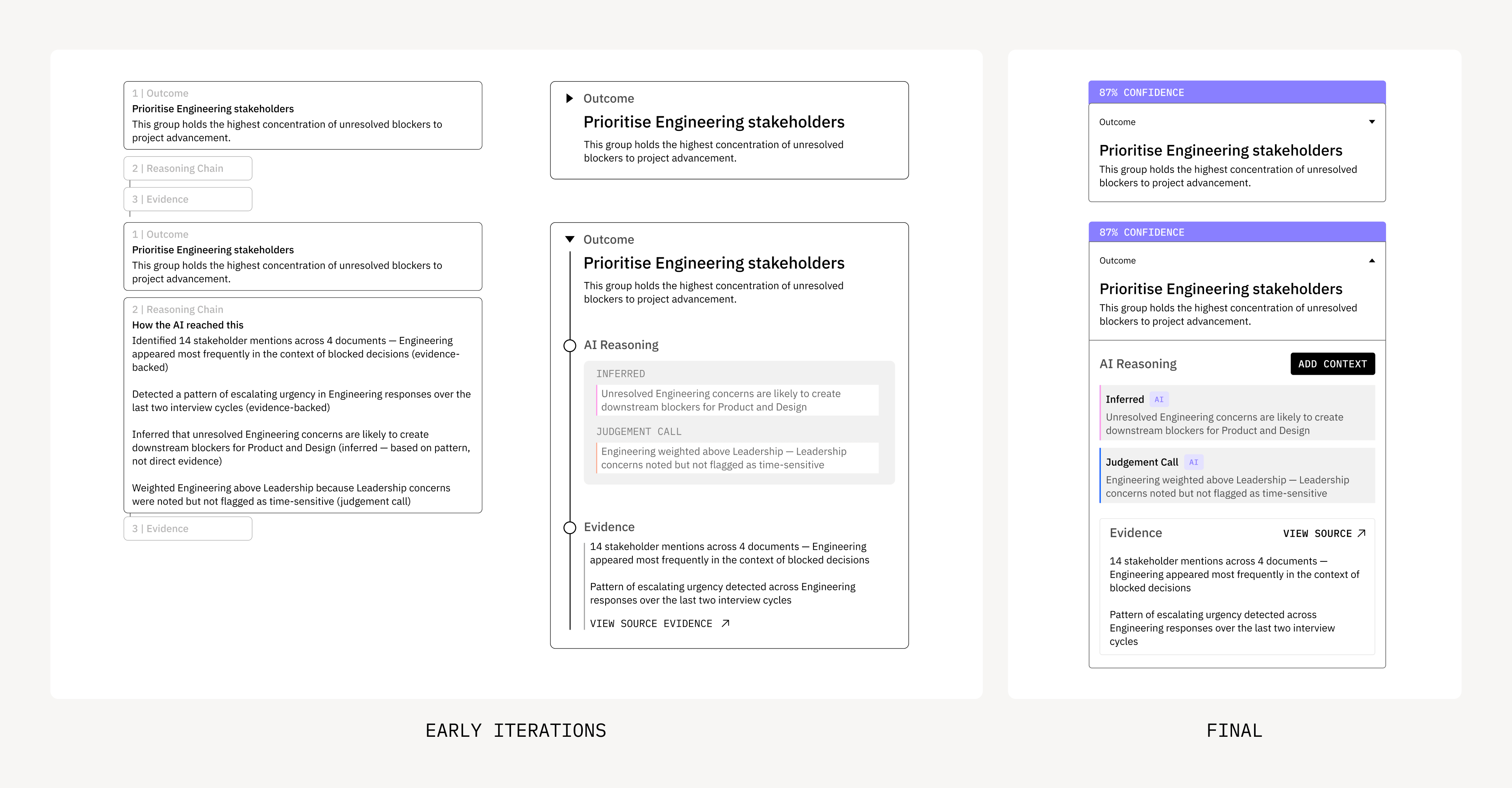

1. Cognitive load on demand

Early designs displayed all three layers of information at once. Later iterations moved to progressive disclosure, so each layer appears only when the user asks for it.
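Progressive disclosure over three layers can be sketched as a single depth value that the user raises or lowers. The layer names here ("summary", "reasoning", "evidence") are assumptions; the write-up only says there are three layers, not what they are called.

```typescript
// Hypothetical layer names; only the count (three) comes from the write-up.
const LAYERS = ["summary", "reasoning", "evidence"] as const;
type Layer = (typeof LAYERS)[number];

// Return the layers visible at a given depth, always showing at least the first.
function visibleLayers(depth: number): Layer[] {
  const clamped = Math.min(Math.max(depth, 1), LAYERS.length);
  return LAYERS.slice(0, clamped);
}

// Reveal one more layer, stopping at the deepest one.
function expand(depth: number): number {
  return Math.min(depth + 1, LAYERS.length);
}

// Hide the deepest visible layer, never going below the first.
function collapse(depth: number): number {
  return Math.max(depth - 1, 1);
}

// Start collapsed, then expand once on demand.
let currentDepth = 1;
console.log(visibleLayers(currentDepth));
currentDepth = expand(currentDepth);
console.log(visibleLayers(currentDepth));
```

In a React component the depth would typically live in local state, with the expand and collapse helpers wired to a toggle control.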

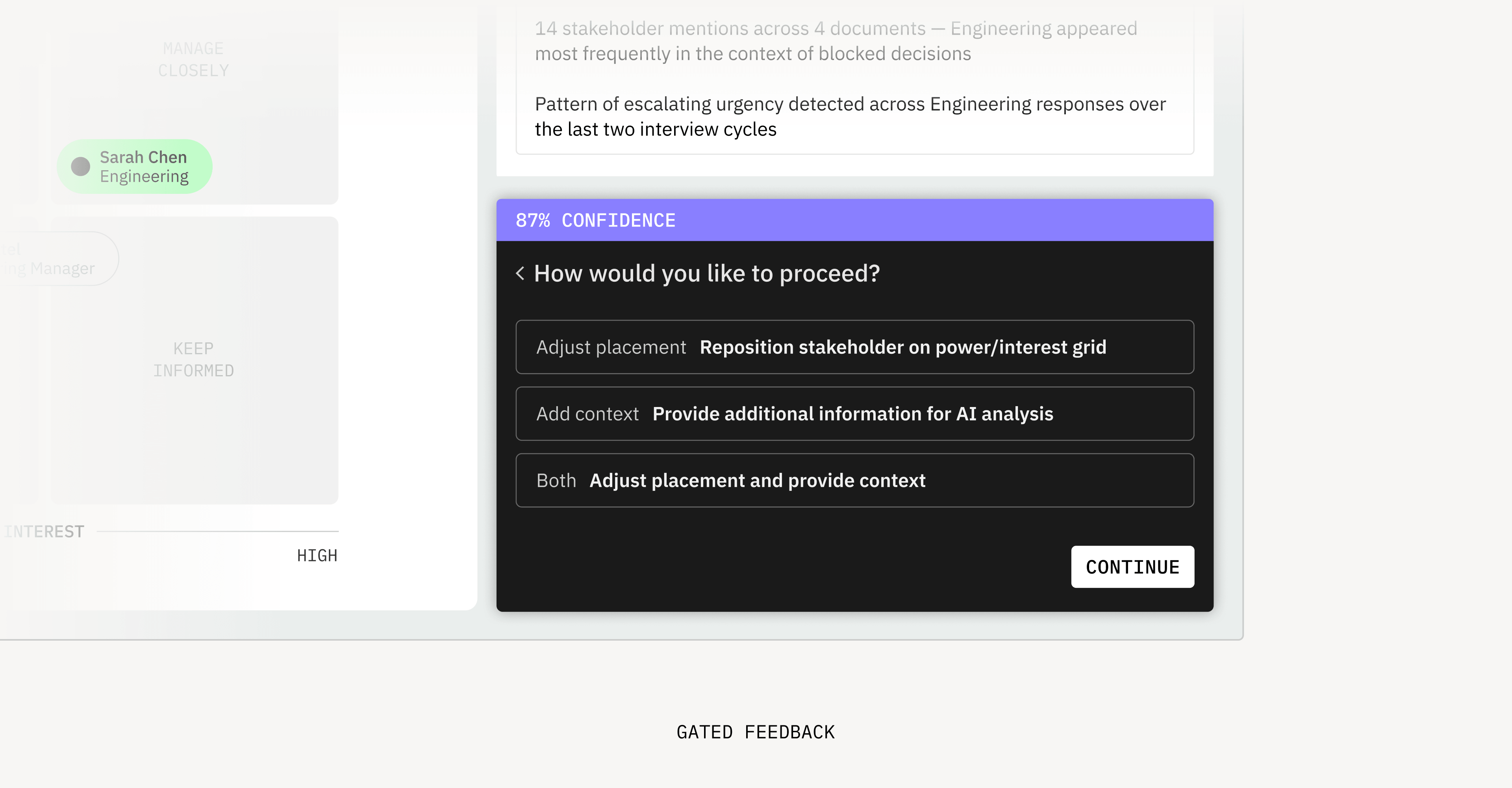

2. Human agency is built in

The AI never auto-advances. A gated component requires a human decision before anything proceeds, and an override is always available.
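The gating behaviour can be modelled as a small state machine in which the only transitions out of the waiting state are human actions. This is a sketch under assumptions: the state names, action names, and reducer shape are hypothetical and not taken from Scaffold's source.

```typescript
// Hypothetical gate model: state and action names are illustrative assumptions.
type GateState =
  | { status: "awaiting-decision"; recommendation: string }
  | { status: "accepted"; recommendation: string }
  | { status: "overridden"; recommendation: string; humanChoice: string };

// Every action originates from a person. There is no timer or auto-accept action.
type GateAction =
  | { type: "accept" }
  | { type: "override"; humanChoice: string };

function gateReducer(state: GateState, action: GateAction): GateState {
  switch (action.type) {
    case "accept":
      // Accepting only applies while the gate is still waiting.
      if (state.status !== "awaiting-decision") return state;
      return { status: "accepted", recommendation: state.recommendation };
    case "override":
      // Override is allowed from any state, including after acceptance.
      return {
        status: "overridden",
        recommendation: state.recommendation,
        humanChoice: action.humanChoice,
      };
  }
}

// The flow may continue only once a human has decided.
function canAdvance(state: GateState): boolean {
  return state.status !== "awaiting-decision";
}

const gateStart: GateState = { status: "awaiting-decision", recommendation: "Option A" };
const gateAccepted = gateReducer(gateStart, { type: "accept" });
const gateOverridden = gateReducer(gateAccepted, { type: "override", humanChoice: "Option B" });

console.log(canAdvance(gateStart), canAdvance(gateAccepted), gateOverridden.status);
```

The reducer signature matches what React's `useReducer` expects, so the same function could drive a gated component directly. Keeping the original recommendation on the overridden state lets the interface show what the AI suggested alongside what the person chose.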

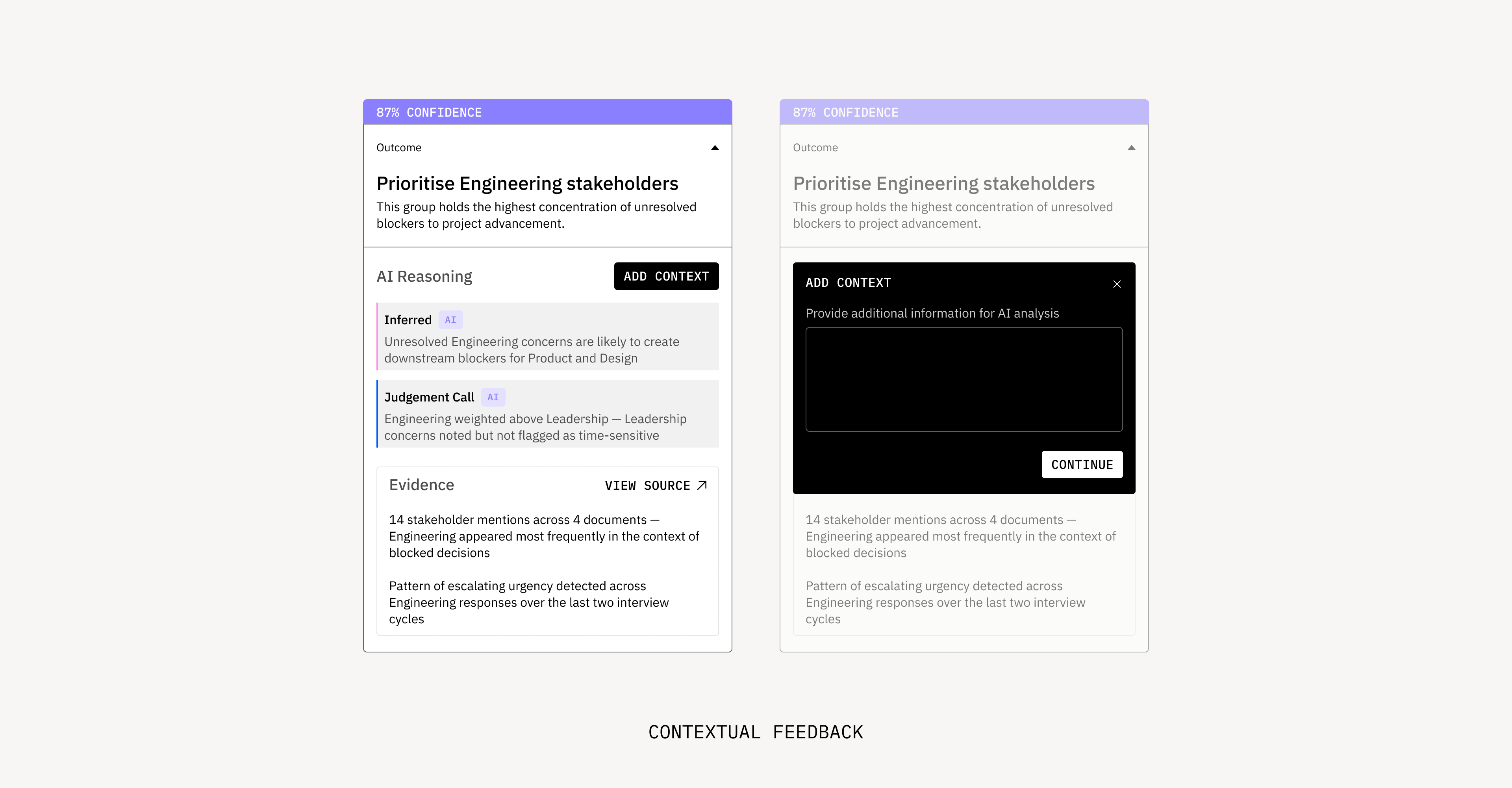

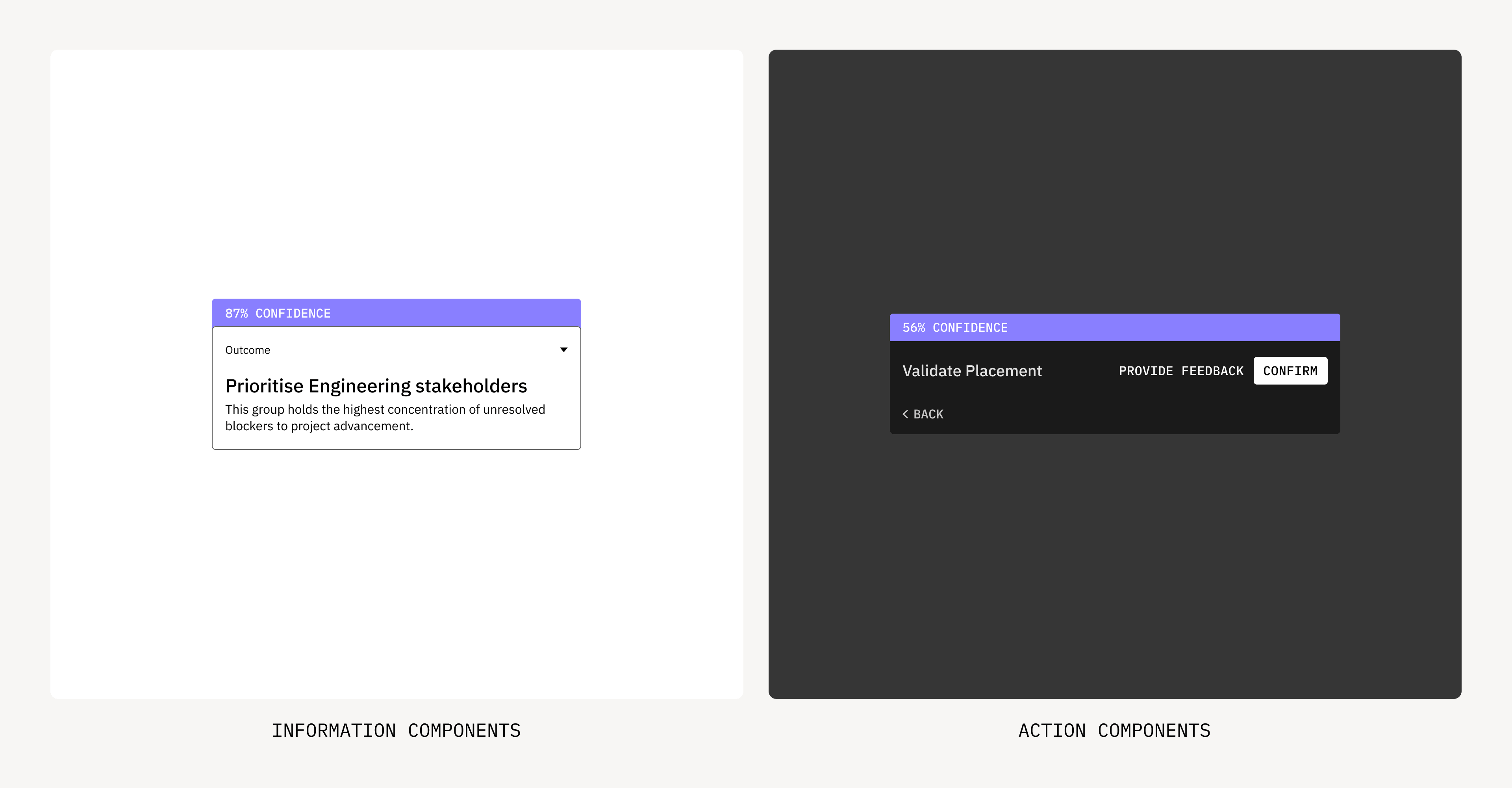

3. Two component families

Visual language separates informative components from action components, using light and dark modes to tell the two families apart.

Reflection

Transparency alone doesn’t build trust. It needs validation loops, confidence communication, and honest uncertainty states working as a system. Designing one component revealed the gaps the others needed to fill.